When you deploy a Tanzu Kubernetes Grid (TKG) Cluster using the integrated TKG Service in vSphere with Tanzu, you can specify a Virtual Machine Class Type, which determines the amount of CPU and Memory allocated to the Control Plane and Worker Node VMs of your TKG Cluster.

Here is a sample YAML specification that uses the best-effort-xsmall VM class type for both the Control Plane and Worker Nodes, but you can certainly choose different classes based on your requirements.

    apiVersion: run.tanzu.vmware.com/v1alpha1
    kind: TanzuKubernetesCluster
    metadata:
      name: william-tkc-01
      namespace: primp-industries
    spec:
      distribution:
        version: v1.17.8+vmware.1-tkg.1.5417466
      settings:
        network:
          cni:
            name: antrea
          pods:
            cidrBlocks:
            - 193.0.2.0/16
          serviceDomain: managedcluster.local
          services:
            cidrBlocks:
            - 195.51.100.0/12
      topology:
        controlPlane:
          class: best-effort-xsmall
          count: 1
          storageClass: vsan-default-storage-policy
        workers:
          class: best-effort-xsmall
          count: 3
          storageClass: vsan-default-storage-policy
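For reference, here is a minimal sketch of how a specification like the one above could be applied. It assumes you are already logged in to the Supervisor Cluster with kubectl and that the YAML was saved as `tkc.yaml`, which is a file name I made up for this example. The namespace is the one from the sample spec.

```shell
# Hypothetical file name: save the sample specification under it first.
MANIFEST="tkc.yaml"
# Namespace taken from the sample specification.
NAMESPACE="primp-industries"

if command -v kubectl >/dev/null 2>&1 && [ -f "$MANIFEST" ]; then
  # List the VM class names that can be used in the "class" fields.
  kubectl get virtualmachineclasses
  # Create the TKG Cluster, then check on its status.
  kubectl apply -f "$MANIFEST"
  kubectl get tanzukubernetescluster -n "$NAMESPACE"
else
  echo "kubectl or $MANIFEST not found, nothing applied"
fi
```

The guard around the kubectl calls is only there so the snippet does nothing when the file or the CLI is missing.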

Today, there are a total of 16 VM Class types that you can select from. However, these are not customizable, which is a request that has been coming up more often recently. The vSphere with Tanzu team is aware of this request and is working on a solution that not only makes customizing CPU and Memory easier but also supports storage customization. As you can see from the table below, 16GB is the only supported storage configuration today.

In the meantime, if you need a supported path for customizing your TKG Guest Clusters, one option is to use the TKG Standalone / MultiCloud CLI, which can be used with a vSphere with Tanzu Cluster. You will need to deploy an additional TKG Management Cluster (basically a few VMs), but once you have that, you can override the CPU, Memory and Storage of both the Control Plane and Worker Nodes using the following environment variables:

- VSPHERE_WORKER_NUM_CPUS

- VSPHERE_WORKER_MEM_MIB

- VSPHERE_WORKER_DISK_GIB

- VSPHERE_CONTROL_PLANE_NUM_CPUS

- VSPHERE_CONTROL_PLANE_MEM_MIB

- VSPHERE_CONTROL_PLANE_DISK_GIB
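As a rough sketch, the variables above could be exported in your shell before creating a workload cluster with the TKG CLI. The sizing values and the cluster name `custom-tkc-01` are made up for illustration, and the `dev` plan is assumed, so adjust all of them to your environment.

```shell
# Illustrative sizing values only, not recommendations.
export VSPHERE_WORKER_NUM_CPUS=4
export VSPHERE_WORKER_MEM_MIB=8192
export VSPHERE_WORKER_DISK_GIB=40
export VSPHERE_CONTROL_PLANE_NUM_CPUS=2
export VSPHERE_CONTROL_PLANE_MEM_MIB=4096
export VSPHERE_CONTROL_PLANE_DISK_GIB=40

# Hypothetical cluster name. Requires an existing TKG Management Cluster.
if command -v tkg >/dev/null 2>&1; then
  tkg create cluster custom-tkc-01 --plan dev
else
  echo "tkg CLI not found, skipping cluster creation"
fi
```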

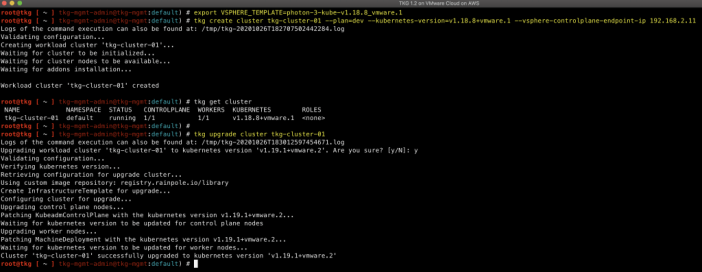

If you are interested, the easiest way to get started is with my TKG Demo Appliance Fling, which was recently updated to the latest TKG 1.2 release. That release supports K8s v1.19, which is currently not available on vSphere with Tanzu.

Now, you might ask, would it be possible to create your own custom VM class types using vSphere with Tanzu? Well .... keep reading to find out 🙂

Disclaimer: This is not officially supported by VMware, use at your own risk. These custom changes can potentially impact upgrades or automatically be reverted upon the next update or upgrade. You have been warned.