After publishing my Nested ESXi enhancements for vSphere 6.5 article, I received a number of questions about whether the new Virtual NVMe (vNVMe) capability introduced in the upcoming vSphere 6.5 release would also work with a Nested ESXi VM. The answer is yes: similar to PVSCSI and VMXNET3, we also have an NVMe driver for ESXi running in a VM.

Disclaimer: Nested ESXi and Nested Virtualization are not officially supported by VMware. Please use at your own risk.

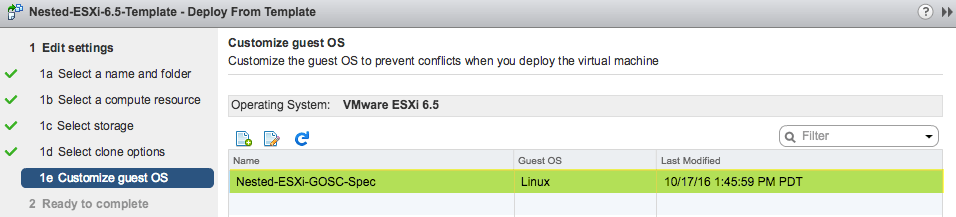

To consume the new vNVMe for a Nested ESXi VM, you will need to use the latest ESXi 6.5 and later compatibility (vHW 13). Once that has been done, you can add a new NVMe Controller to your Nested ESXi VM and then assign one of the virtual disks to it, as shown in the screenshot.
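If you prefer to think about this in terms of what ends up in the VM's configuration, here is a minimal Python sketch that renders the kind of `.vmx` entries I would expect for a single disk on an NVMe controller. The key names (`nvme0.present`, `nvme0:0.fileName` and so on) are an assumption based on how other virtual controllers are typically expressed in a `.vmx` file, not something confirmed here, and the function names and the disk filename are hypothetical. The only part taken from the steps above is the vHW 13 requirement.

```python
def nvme_vmx_entries(disk_file, controller=0, unit=0, hw_version=13):
    """Build .vmx style key/value pairs for one disk on a virtual NVMe controller.

    The key names are assumed, not taken from official documentation.
    """
    # vNVMe requires ESXi 6.5 and later compatibility (vHW 13).
    if hw_version < 13:
        raise ValueError("vNVMe needs virtual hardware version 13 or later")

    ctrl = f"nvme{controller}"   # e.g. nvme0
    dev = f"{ctrl}:{unit}"       # e.g. nvme0:0
    return {
        "virtualHW.version": str(hw_version),
        f"{ctrl}.present": "TRUE",    # assumed key: enables the controller
        f"{dev}.present": "TRUE",     # assumed key: enables the disk slot
        f"{dev}.fileName": disk_file, # assumed key: backing VMDK
    }


def render_vmx(entries):
    """Render the pairs as 'key = "value"' lines, one per entry."""
    return "\n".join(f'{key} = "{value}"' for key, value in entries.items())


if __name__ == "__main__":
    # Hypothetical VMDK name, for illustration only.
    print(render_vmx(nvme_vmx_entries("Nested-ESXi-6.5.vmdk")))
```

Treat this purely as a way to visualize the controller-to-disk relationship. For a real deployment, adding the controller through the vSphere Web Client remains the safer route.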

Next, install ESXi 6.5 as you normally would; the NVMe controller will be detected automatically and the driver will be loaded. In the example below, you can see I only have a single disk, which ESXi itself is installed on, and it is backed by the NVMe Controller.

One of the biggest benefits of using an NVMe interface over traditional SCSI is that it can significantly reduce protocol overhead, which in turn consumes fewer CPU cycles and reduces the overall IO latency for your VM workloads. Obviously, when using it inside of a Nested ESXi VM, YMMV, but hopefully you should see an improvement there as well. For those who plan to give this a try in their environment, it would be good to hear what type of use cases you have in mind. If you have any feedback (good or bad), feel free to leave a comment.