I first learned about Supermicro's E100-9W platform last year and wrote about it here. The E100-9W is a fanless kit that is part of Supermicro's Embedded IoT family and targets similar use cases to the Intel NUC, such as Industrial Automation, Retail, Smart Medical Systems, Kiosks and Digital Signage. Although the E100-9W was only released in 2020, it used a much older Intel 8th Generation CPU due to some constraints with Intel's embedded CPU roadmap.

Supermicro did mention last year that a Tiger Lake-based model was in the works, and last week I got my hands on a pre-production unit of the 2nd generation of this platform, called the E100-12T.

Models

The E100-12T is expected to be available in Q1 of 2022 and will include three models across i3, i5 & i7 CPUs. Pricing has not been announced yet, so you will need to check with a vendor in your region once the system is available for purchase.

Hardware Specs

At first glance, you will notice that the E100-12T chassis is exactly the same as the previous E100-9W. Internally, there are a few changes in addition to the updated Tiger Lake CPU, including support for PCIe Gen4, dual 2.5GbE, GT2 Xe Graphics and the addition of USB 3.2 & USB-C ports. For those familiar with the Intel NUC, these specs are pretty similar to the recent 11th Gen Panther & Tiger Canyon NUCs, which also use Tiger Lake CPUs.

- 64GB SO-DIMM (DDR4-2400)

- 1 x M.2 (2242/2280), PCIe Gen3 NVMe or SATA3

- 1 x M.2 (2242/2280), PCIe Gen4 NVMe

- 2 x 2.5GbE onboard NIC

- TigerLake-LP GT2 (Iris Xe Graphics)

- 1 x USB-C (USB 3.2)

- 3 x USB 3.2

- 4 x USB 2.0

The internals are pretty much the same as the E100-9W. If the COM ports were removed, the chassis could probably be smaller, or the space could go toward additional capabilities such as another M.2 storage slot, which would certainly be handy for a VMware setup.

ESXi

Since the E100-12T uses a Tiger Lake CPU like the 11th Gen Intel NUC, which requires the Community Networking Driver for ESXi Fling to enable its onboard 2.5GbE NIC, I was surprised to see that the NICs were NOT detected by that driver. Upon closer inspection, because the E100-12T uses an embedded CPU rather than a mobile CPU from Intel, the network adapters are slightly different. The E100-12T has a pair of Intel I225-IT(2) adapters, which were not supported by the existing Community Networking Driver for ESXi.

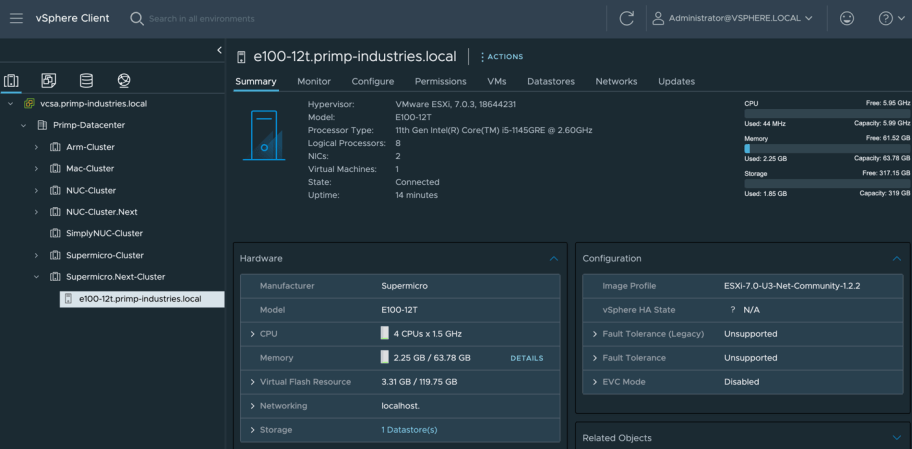
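To make the "not detected" behavior a bit more concrete: a NIC driver typically claims a device only if its PCI vendor:device ID pair appears in the driver's ID table, so a different I225 variant can be skipped even though the silicon is closely related. Below is a minimal Python sketch of that matching logic. The ID tables are hypothetical and are NOT taken from the Community Networking Driver itself, and the device IDs are the ones I believe the Linux igc driver uses for these adapters, so verify them against your own hardware before relying on them.

```python
from typing import FrozenSet, Tuple

PciId = Tuple[int, int]  # (vendor_id, device_id)

INTEL_VENDOR_ID = 0x8086

# Assumed device IDs, for illustration only; verify on your own system.
I225_LM = 0x15F2
I225_V = 0x15F3
I225_IT = 0x0D9F


def parse_pci_id(text: str) -> PciId:
    """Parse a 'vvvv:dddd' hex string such as '8086:0d9f'."""
    vendor, device = text.strip().lower().split(":")
    return int(vendor, 16), int(device, 16)


def is_claimed(pci_id: PciId, id_table: FrozenSet[PciId]) -> bool:
    """A driver only binds to devices listed in its ID table."""
    return pci_id in id_table


# Hypothetical ID table of a driver release without I225-IT support.
TABLE_BEFORE: FrozenSet[PciId] = frozenset({
    (INTEL_VENDOR_ID, I225_LM),
    (INTEL_VENDOR_ID, I225_V),
})

# Hypothetical ID table after the extra device ID has been added.
TABLE_AFTER: FrozenSet[PciId] = TABLE_BEFORE | {(INTEL_VENDOR_ID, I225_IT)}

if __name__ == "__main__":
    nic = parse_pci_id("8086:0d9f")
    print("claimed by older table:", is_claimed(nic, TABLE_BEFORE))
    print("claimed by newer table:", is_claimed(nic, TABLE_AFTER))
```

The takeaway is simply that adding support for a closely related adapter can be as small as a new ID table entry, assuming the driver logic already handles that variant.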

Thanks to our Engineering team, we were able to quickly enable this device, and last week we released v1.2.2 of the Community Networking Driver for ESXi, which is required when installing ESXi on the E100-12T. Once the driver was incorporated into a custom ESXi image, installation went without a hitch. As you can see in the screenshot below, I was able to run the latest ESXi 7.0 Update 3 release without any issues.

I also noticed that the E100-12T includes a newer TigerLake-LP GT2 (Iris Xe Graphics) iGPU, and I was curious whether this Supermicro platform would also be affected by the passthrough issue seen with the Intel NUCs. Sadly, the same issue exists. I suspect the passthrough issue is a defect with the Tiger Lake CPU, as iGPU passthrough was never a problem with prior CPU generations.

Overall, the E100-12T is a great kit for anyone in the market for a small and quiet (fanless) VMware Homelab system with the latest Tiger Lake CPUs. The upgraded storage and networking over the E100-9W make the E100-12T a modern candidate for those looking at Intel NUC alternatives. My only minor complaint about this platform is that I wish the physical IO ports included Thunderbolt 4, rather than just USB-C, especially since the CPU supports it. The main reason is that with the upcoming removal of SD Card/USB support for ESXi, a Thunderbolt 4 port would allow customers to easily add additional storage and/or networking, similar to the Intel NUCs. Alternatively, support for another M.2 storage device on the motherboard would also suffice, especially given that the form factor is slightly larger than an Intel NUC. It would be a welcome addition for the ESXi boot volume, and the other two M.2 slots could then be used exclusively for vSAN.
