The Intel Xeon D embedded SoC product line is an amazing platform and has been extremely popular amongst VMware customers for VMware Homelabs and for running workloads both in the Datacenter and at the Edge, using kits like the Supermicro E200-8D and E300-9D, to name just a few. While the E200-8D/E300-9D remain popular, they are slowly coming up on their end-of-life support dates, Q1 2023 and Q1 2025 respectively.

Intel Ice Lake D is the first major architectural refresh of the Intel Xeon D product line, which is quite exciting for those looking to upgrade and take advantage of all the latest hardware capabilities this new platform has to offer! Two Ice Lake D kits that I am really excited about are the Supermicro E300-12D and E302-12D, both of which leverage the new Ice Lake D SoC. As an E200-8D owner, my only real nitpick is the noise of the stock fans, which can sound like a jet engine. Although I have replaced the stock fans with the much quieter Noctua fans, the system is still audible under higher load, and some in the community have also reported that the replacement fans may not be enough to cool the system.

If I had to start all over again, I certainly would be looking at one of the new fanless kits from Supermicro! I was fortunate enough to get my hands on the upcoming Supermicro E302-12D system, a fanless Ice Lake D kit which has a similar design to the existing E300-9D.

So here is your first sneak peek at this new Ice Lake D platform! 😀

Compute

The E302-12D has a single embedded Intel D-1718T SoC with 4 Cores / 8 Threads @ 2.6GHz and can support up to 256GB of memory (ECC & Non-ECC) using 4 x RDIMM slots. This doubles the memory capacity of the current E200-8D and is on par with the existing E300-9D in terms of memory. To cover all your homelab needs, I definitely recommend a minimum of 128GB of memory, but if you can go higher, it is definitely a worthy upgrade.

UPDATE (04/20/23) - A reader recently brought to my attention that Supermicro officially recommends using Innodisk memory for the E302-12D, as it has the proper contacts to help dissipate heat from the system. If you use other types of memory, it is very possible that the system will overheat, as it has no way to dissipate the heat properly, and shut itself down. If using the recommended memory is not possible, you may want to consider the non-fanless version of this system, the E300-12D, which uses traditional fans.

Network

The networking on the E302-12D is also quite plentiful and with Ice Lake D, the networking has also gotten a major upgrade with a pair of 25GbE SFP28 interfaces. A dedicated 1GbE interface is also available for out-of-band management (IPMI) and below are the full networking details.

- 1 x 1GbE for IPMI

- 2 x Intel I210 1GbE (8086:1533)

- 2 x Intel I350 1GbE (8086:1521)

- 2 x Intel E823-L SFP28 25GbE (8086:124d)

Storage

The storage on the E302-12D has had a slight upgrade and can support both a single M.2 2280 (NVMe) and a single M.2 2242 (SATA) out of the box. Although this may not sound too impressive, the E302-12D is actually quite flexible and can support a number of storage configurations based on your needs, whether that is multiple VMFS datastores or vSAN.

- SATA port (orange)

  - While you can install and run ESXi on a USB device, it is certainly recommended to use a more reliable storage medium like an SSD. A SATADOM (SuperDOM) can easily be added to the E302-12D to provide an additional storage device, which is also perfect for running ESXi. This is also the same configuration that I am using with my E200-8D.

- PCIe slot (4.0 x16)

  - An inexpensive PCIe-to-M.2 NVMe adapter can be used to provide additional storage supporting a single M.2 (2280) NVMe device. This is also the same configuration that I am using with my E200-8D.

  - The Supermicro AOC-SLG3-2M2 (Add-On-Card) can be used to provide additional storage supporting two additional M.2 (2280) NVMe devices.

  - For larger storage capacity needs, instead of using the Supermicro M.2 AOC, a single 2.5" SATA drive can also be installed using a full-height, half-length (FHHL) adapter.

With the additional storage expandability, users can have up to 5 storage devices: M.2 2280 (NVMe) from motherboard, M.2 2242 (SATA) from motherboard, SATADOM from motherboard, 2 x M.2 2280 (NVMe) from PCIe slot.
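To make the device count concrete, here is a minimal Python sketch that tallies the storage options described above. The dictionary keys and the `max_storage_devices` helper are hypothetical names used only for illustration; the device counts come straight from the list above.

```python
# Storage devices that attach directly to the motherboard (counts from the post).
ONBOARD_DEVICES = {
    "M.2 2280 (NVMe)": 1,
    "M.2 2242 (SATA)": 1,
    "SATADOM on the SATA port": 1,
}

# The single PCIe slot can hold exactly one of these options.
# Keys are hypothetical labels; values are the number of drives each option adds.
PCIE_OPTIONS = {
    "single_m2_adapter": 1,   # inexpensive PCIe-to-M.2 NVMe adapter
    "aoc_slg3_2m2": 2,        # Supermicro AOC-SLG3-2M2 with two M.2 NVMe devices
    "sata_2_5_fhhl": 1,       # single 2.5" SATA drive on an FHHL adapter
}


def max_storage_devices(pcie_option: str) -> int:
    """Return the total number of storage devices for a given PCIe slot choice."""
    return sum(ONBOARD_DEVICES.values()) + PCIE_OPTIONS[pcie_option]


if __name__ == "__main__":
    for option in PCIE_OPTIONS:
        print(f"{option}: {max_storage_devices(option)} devices")
```

Only the AOC-SLG3-2M2 option reaches the maximum of 5 devices; the other two PCIe choices top out at 4.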

UPDATE (06/20/23) - A removable drive tray accessory from Supermicro can also be used with the E302-12D. For more details, please refer to this blog post HERE.

ESXi

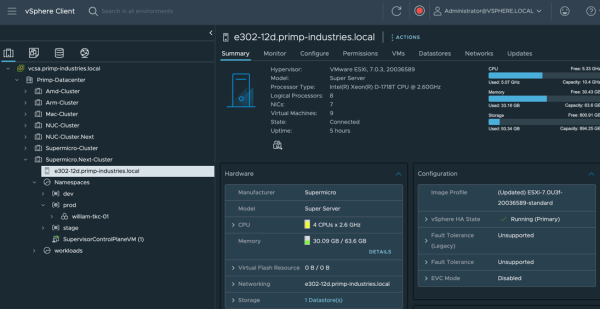

The last and most important question: does the new E302-12D run ESXi? You can probably guess based on the picture above 😀

Yes, ESXi installs flawlessly on the new Ice Lake D platform with the help of the latest ESXi 7.0 Update 3f release, which includes an update to the icen (Ice Lake) native driver that is needed for ESXi to recognize the two new 25GbE SFP28 adapters. Earlier releases of ESXi can also be installed; however, only the Intel I210 and I350 adapters will be recognized by ESXi, so it is recommended that you use ESXi 7.0 Update 3f or newer. Those interested in the E300-12D should also have no issues running ESXi as long as they are using 7.0 Update 3f or newer.
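As a rough illustration of the driver requirement described above, the following Python sketch lists which onboard adapters you could expect ESXi to recognize for a given release. This is not a VMware tool or API: the `Nic` type, the `recognized_nics` helper, and the tuple encoding of a release are all hypothetical, and the PCI IDs are the ones from the Network section.

```python
from typing import NamedTuple


class Nic(NamedTuple):
    name: str
    pci_id: str            # vendor:device ID from the Network section
    ports: int
    needs_new_icen: bool   # True if the updated icen driver is required


# Onboard data adapters of the E302-12D (the IPMI port is not seen by ESXi).
ONBOARD_NICS = [
    Nic("Intel I210 1GbE", "8086:1533", 2, False),
    Nic("Intel I350 1GbE", "8086:1521", 2, False),
    Nic("Intel E823-L SFP28 25GbE", "8086:124d", 2, True),
]

# Hypothetical encoding of "ESXi 7.0 Update 3f" as (major, minor, update, patch).
MIN_RELEASE_FOR_25GBE = (7, 0, 3, "f")


def recognized_nics(release: tuple) -> list:
    """Return the adapters expected to be recognized by the given ESXi release."""
    has_new_icen = release >= MIN_RELEASE_FOR_25GBE
    return [nic for nic in ONBOARD_NICS if has_new_icen or not nic.needs_new_icen]


if __name__ == "__main__":
    for release in [(7, 0, 3, "c"), (7, 0, 3, "f")]:
        ports = sum(nic.ports for nic in recognized_nics(release))
        print(release, "->", ports, "ports recognized")
```

Tuple comparison keeps the version check simple: releases are compared field by field, so anything older than 7.0 Update 3f yields only the four 1GbE ports.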

Note: While the two 25GbE SFP28 adapters are currently not listed on VMware's HCL, you can take a look here to see other supported Ice Lake D-based network adapters.

Note: A USB-based Remote Network Driver Interface Specification (RNDIS) interface is also available within the E302-12D and can be passed through to a VM if needed.

For my storage setup, I used vSAN configured with both an M.2 NVMe and an M.2 SATA device. I also configured vSphere with Tanzu and deployed some Tanzu Kubernetes Cluster (TKC) workloads, and everything came up quickly. For those interested in using a vTPM, the E302-12D also includes the latest TPM chip and functions as expected.

My overall impression of the new E302-12D is that it does not disappoint and it being completely silent was a HUGE plus for me. In fact, when I had powered down my E200-8D, it felt weird not to hear anything 😉 For those interested in purchasing either the E300-12D or E302-12D, I have been told general availability should be sometime in Q3 of 2022.

Nice hardware. I'd be more interested in the 8 core version but sadly enough both are outside my budget at € 1350 and € 2100. If you can get them that is... You write GA should be Q3 2023. Is that a typo ?

Thanks for the catch, Tom. It should be Q3 of this year (2022)

hi william,

thanks a lot, it would be great if you could provide some links with approx prices for the E300-12D or E302-12D servers .

You’ll need to inquire that based on your available vendors and locations

Thanks 👍

You had me at fanless 🙂

Hopefully you mean Q3 2022! Do we know if it'll be possible to purchase the motherboard itself (X12SDV-8C-SP6F)? For upgrading my homelab I'd like to continue using my rackmount 2U cases, need to check if my cases have the FlexATX form factor. I'm also interested in using this platform for a 2-node vsan cluster, is it possible to direct connect the 25gb interfaces to each other crossed over without requiring the use of a 25gb networking fabric? I can move the witness traffic to the 1gb interface. Thanks William, great post!

Corrected, it should be Q3 of this year (2022). Similar to 10GbE, I'd expect that you do not need a 25GbE-capable switch and can indeed use direct connect

You likely just need a 25Gb rated DAC for the cheapest possible Direct Connect.

I can't find anything on Intel's site to confirm, but guessing the 823 chipset would still support a 10Gb connection as well using whatever cables you already have.

The specs all say that it will take an FHHL PCIe card. But the dimensions of the box look like it should take something a little longer, as it is quoted at 226mm in depth. What are the actual inside dimensions of the unit? I have a card that is 202.65mm long that I am looking at. HL is generally considered to be only 167.65mm.

I just measured the inside and giving a slight buffer which came out to 190 mm

Thanks. That means the 6xM.2 (NGSFF) will only fit without its fans, which I am afraid will overheat. https://www.amfeltec.com/pci-express-gen-3-carrier-board-for-6-m2-or-ngsff-nf1-pcie-ssd-modules/ But their 2xM.2 (NGSFF) will fit with fans. https://www.amfeltec.com/pci-express-gen-3-carrier-board-for-2-m-2-or-ngfss-nf1-pcie-ssd-modules/. For those that don't know, NGSFF is 30.5mm wide instead of 22mm like most M.2 SSDs. This allows larger capacities like the SAMSUNG PM983 at 15.6TB. Thanks again for the rapid reply.

Hey William - great post! Do you know if the 25gb card will be able to support RDMA vSAN? I was only able to find references to iWARP support for that card, but no RoCE?

Hey William, thanks for sharing your experience. This is a must buy for me. I'm curious if you have a distributor you go through for Supermicro gear? It's tough to find!

The article and screenshot says 4c/8t, but the specs in the supermicro links say 302=8c/16t and 300=10c/20t. Is this early prototype using a smaller processor or is the SM site wrong?

One thing for sure, that 4c/8t stuff is getting 'really' old; in a world of Ryzen 5950x (16c/32t). Which could be had TODAY for $479.99 (microcenter); down from its original price of $800.00

we 'are' talking about virtual servers, i.e., you need some CPU resources in the box.

I'm just saying.

Why is the thermal pad film not removed?

Hmm this could be the successor of my nuc7i7. Sadly I don't find any CPU benchmarks.

I am just a bit concerned about its heat dissipation. Is it OK to run 7x24 with the passive cooling? My sys e300-8d often goes up to 80C under light load

For anyone who is interested in this machine:

I've bought and tested one E302-12d-8c with 256GB RAM (64x4). In idle state, the temperature of dimm zone will reach high critical value within 3~5 hours, and poweroff for self-protection is triggered. Finally the sales told me only innodisk memory is tested ...

So it is just unusable for homelab unless you have a rack with proper cooling equipment

Hi Lin,

Thanks for sharing. I just shared your comment/concern with the Supermicro folks, and they responded that the Xeons do get warm and that, without proper heatsinks on the memory, you can see these wild temperature changes. They mentioned they may have already shared this with you as the solution, as this case sounded familiar to them.

So their recommendation, which I will add to the blog post, is to use Innodisk memory, as it makes proper contact with the heatsink and is able to dissipate heat properly so the system does not overheat. If you cannot use this type of memory, then you may want to consider the non-fanless version of the system

Hi William, do you know if you can run VSAN using SATA ahci driver? Meaning the SATA port straight from the motherboard and not going through any HBA controller. I know ever since the beginning of VSAN it has been not supported due to queue depth numbers. But for home lab use case will it give me a hard-stop/hard-error or just a warning and let me continue?

Try it and see 🙂

How does it handle ESXi 8.0?

I am ready to refresh my home lab and these boxes look ideal. Has anyone installed ESXi 8.0 update 1a?