It can be pretty difficult to find an AMD-based small form factor (SFF) kit that is compatible with recent ESXi releases. The large majority of these AMD SFF kits use Realtek for their onboard network adaptor, and Realtek does not have a driver for ESXi, nor has it expressed any interest in creating one.

This is also quite unfortunate, as there have been some pretty interesting AMD kits released to the market that cannot be fully utilized due to the network driver challenges. Prior to the holiday break, fellow VMware colleague Hilko Lantinga, who works in our End User Computing (EUC) division, reached out about a new AMD kit that he had just acquired and has been very happy with after putting it through its paces, especially from a power efficiency standpoint for his updated VMware Homelab.

The system is from a vendor called ChangWang, which I was not familiar with, and the AMD kit is the CW56-58, which is based on the AMD Zen 3 architecture (7nm). What stands out immediately is that this system includes four built-in network adaptors (hint: they are all compatible with the latest ESXi 8.0 release)! I asked if he would be willing to share his thorough review with the community, as this could be a really interesting system, and below is Hilko's detailed write-up in his own words.

The content below is a contribution from Hilko Lantinga.

Background

With the current EU energy crisis (plus the upcoming CO2 tax) and to remove some heat and noise, I’ve been thinking about getting a small power efficient device that can run the eight VMs I always want to be on. I’ve got two big dual AMD EPYC Milan hosts with loads of NVMe disks and GPUs and an SMB 10GbE switch. Ideally the system would have 8-16 Zen 3 cores (so I can vMotion), 64-128GB of RAM and 2-4 2.5-10GbE ESXi 8 compatible NICs.

When I saw the CW56-58, my interest was sparked: could this be my ultimate noise/heat/power saver that would put my large hosts in standby 90% of the time?

The CW56-58 can be purchased on AliExpress from Topton, a reseller that ships to both the US and EMEA.

Compute

The CW56-58 comes with a choice of two AMD Cezanne U (Zen 3, Ryzen 5000 Mobile) CPUs or a Barcelo refresh CPU:

| CPU | AMD Ryzen™ 5 5600U | AMD Ryzen™ 7 5800U | AMD Ryzen™ 7 5825U |
|---|---|---|---|
| Cores | 6 | 8 | 8 |
| Threads | 12 | 16 | 16 |
| Base Clock | 2.3GHz | 1.9GHz | 2.0GHz |
| Max Boost | 4.2GHz | 4.4GHz | 4.5GHz |
| L2 Cache | 3MB | 4MB | 4MB |
| L3 Cache | 16MB | 16MB | 16MB |
| Graphics Cores | 7 | 8 | 8 |
| Graphics Frequency | 1800MHz | 2000MHz | 2000MHz |

I opted for the Barcelo refresh 5825U as the price difference was slim. What is quite interesting about these CPUs is that they consist of a single core complex (CCX), so for the 5825U all eight cores share a single L3 cache. This means that when you create an eight vCPU VM all the threads can work together instead of having two groups of four.

sched-stats -t ncpus

16 PCPUs

8 cores

1 LLCs

1 packages

1 NUMA nodes

Note: Recommended VM setting would be to have the cores per socket equal to the number of vCPUs. The CPUs have 16 usable lanes of PCIe 3.0.
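As a rough illustration of the sizing note above, the `sched-stats -t ncpus` output can be parsed to see how many physical cores sit behind each last-level cache. This is only a sketch: the helper names `parse_ncpus` and `fits_in_one_llc` are made up for this example, and it assumes the output format is exactly the "number, then label" lines quoted in the review.

```python
import re

# Output of `sched-stats -t ncpus` as quoted in the review.
SCHED_STATS_OUTPUT = """\
16 PCPUs
8 cores
1 LLCs
1 packages
1 NUMA nodes
"""


def parse_ncpus(text):
    """Turn lines like '16 PCPUs' into a dict such as {'PCPUs': 16}."""
    topology = {}
    for line in text.splitlines():
        match = re.match(r"\s*(\d+)\s+(.+?)\s*$", line)
        if match:
            topology[match.group(2)] = int(match.group(1))
    return topology


def fits_in_one_llc(topology, vcpus):
    """True if `vcpus` fits on the physical cores that share one L3 cache."""
    cores_per_llc = topology["cores"] // topology["LLCs"]
    return vcpus <= cores_per_llc


topology = parse_ncpus(SCHED_STATS_OUTPUT)
cores_per_llc = topology["cores"] // topology["LLCs"]
print(f"{cores_per_llc} cores share each L3 cache")
print("8 vCPU VM fits in one LLC:", fits_in_one_llc(topology, 8))
```

On a CPU that reports two LLCs for eight cores, the same check would show that an eight vCPU VM spans two cache domains, which is the "two groups of four" case mentioned above.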

System

The case feels a lot sturdier than the pictures show. It feels quite luxurious: everything is thick, heavy and all-aluminum, almost like the better fanless cases where every side is a heatsink. The case is 16.8cm (6.6”) wide, 13.17cm (5.2”) deep and 6.3cm (2.5”) high including the 3mm (0.12”) feet.

The front of the case has the power button, CMOS reset pinhole, one full-featured Type-C USB interface with data/display/reverse charging, two Type-A USB 2.0 and two Type-A USB 3.1 ports as well as a Realtek RT5170 SD card reader. It’s a bit weird that the native USB 3.2 Gen 2 from the CPU doesn’t seem to be used; the Type-A ports might be coming from the chipset. The latest BIOS even has a UEFI module for booting from the card reader. While I don’t recommend using an SD card for booting ESXi, there is nothing wrong with installing from an SD card.

The top of the case has an intake to what ChangWang calls a coppertube turbine, a blower style fan. The CPU is on the back of the motherboard, and it is mounted upside down in the case.

A nice detail is that all fan openings have a mesh-like tape acting as a dust filter.

The rear of the unit has an HDMI and DisplayPort as well as 4 x RJ45 Intel I226-V 2.5GbE ports, a DC input and a screw for a ground lead.

The bottom of the case has rubber feet and a brushless PWM 80mm fan. Its RPM signal isn’t used (only 3 of the 4 pins are connected); the system uses the RPM signal of the blower style fan instead.

The fan could optionally be replaced with a 2.5” SATA drive.

Screws and a cable for this are included.

I wouldn’t recommend replacing the fan with a drive as it provides much-needed airflow to the top side of the motherboard.

Talking about cooling, I strongly recommend replacing the thermal paste of the blower style cooler. ChangWang used Xinyue 7921, but it was absolutely rubbish even when new. Swapping it for Noctua NT-H1 (I would recommend NT-H2, but had none left) made a 12 degrees C difference, and over time the difference would probably become larger and larger. You can remove the cooler by removing the four black screws on the motherboard, two between the M.2 drives and two under the SODIMMs; the four metal-colored screws can be used to remove the motherboard. For extra cooling it would be possible to put 1mm thick thermal stickers between the blower fan and the top of the case.

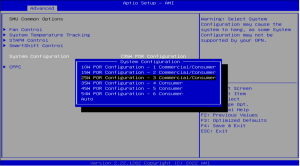

The BIOS allows you to choose (Advanced->AMD CBS->NBIO->SMU->System Configuration) between 10/15(default)/25/35 Watt operation, apparently older BIOS versions even had 45W and 54W, but that was removed.

I can imagine why: with the default thermal paste even 35W operation isn’t sustainable. I had to cancel my test when the CPU hit 99 degrees C and was still climbing rapidly, as I did not want to test what happens at the CPU's maximum operating temperature of 105 degrees C.

With the new thermal paste all modes worked fine, even for an hour of CPU torture.

| BIOS Setting | 10W | 15W (default) | 25W | 35W |
|---|---|---|---|---|
| Short CPU Power Limit | 18W | 30W | 48W | 60W |
| Total System Power Short | 39W | 55W | 84W | 120W |
| All Core Turbo Short | 2.7GHz | 3.1GHz | 3.8GHz | 4GHz |
| Short Time | 10s | 3.5m | 5m | 8m |
| Short Temp | 70°C | 72°C | 76°C | 83°C |
| Long CPU Power Limit | 15W | 25W | 37.5W | 42W |
| Total System Power Long | 33W | 35W | 55W | 80W |
| All Core Turbo Long | 2.4GHz | 2.4GHz | 3.1GHz | 3.7GHz |
| Long Temp | 64°C | 64°C | 67°C | 77°C |
| W11 Idle Power | 10W | 10W | 10W | 10W |

I decided to go for 25 Watts. This gives me a sustained All Core Turbo around the same speed as my dual AMD EPYC 7713 (3.1GHz), a 5-minute short All Core Turbo of 3.8GHz, a single core Turbo of 4.5GHz and only 55W during long heavy operations, all while maintaining the same low idle power usage and temperatures I’m comfortable with. It might even be more energy efficient than 15W during intended operation, as the machine would be able to go back to idle quicker. I would not recommend the 10W option as there are hardly any power savings. Nor would I recommend the 35W option: with the default thermal paste it doesn’t work well, and the peak power usage is above the 90W rating of the (probably not that great) included power supply.
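The power supply argument can be checked with a few lines of arithmetic. The sketch below simply compares the "Total System Power Short" values measured above against the 90W rating of the included power supply; the dictionary layout and the `psu_headroom` helper are only illustrative.

```python
PSU_RATING_W = 90  # rating of the included power supply, per the review

# "Total System Power Short" and "Long" rows from the measurements, in watts.
SYSTEM_POWER_W = {
    "10W": {"short": 39, "long": 33},
    "15W": {"short": 55, "long": 35},
    "25W": {"short": 84, "long": 55},
    "35W": {"short": 120, "long": 80},
}


def psu_headroom(mode):
    """Watts left between the PSU rating and the short-burst system draw."""
    return PSU_RATING_W - SYSTEM_POWER_W[mode]["short"]


for mode in SYSTEM_POWER_W:
    headroom = psu_headroom(mode)
    status = "ok" if headroom >= 0 else "exceeds PSU rating"
    print(f"{mode}: {headroom:+d} W headroom ({status})")
```

With these numbers the 25W setting still leaves 6W of headroom during the short boost, while the 35W setting overshoots the rating by 30W.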

Under ESXi the idle power usage will probably go up by a few Watts and without a monitor attached, the frequency could be slightly higher as there will be more package power left for the CPUs.

The unit is silent when idle, around 45dB when the fan really kicks in, and steadies around 40dB, both measured at 50cm distance. While this might sound like a reasonably quiet system, it isn’t. The noise has a high pitch to it, which makes it appear a bit louder than it is. Fine for a fuse cupboard, but not something I’d like to have on my desk in the office.

While most Chinese manufacturers don’t provide BIOS files, ChangWang actually does, which is good to see. Note that there are two versions of the BIOS, one of which reverses the order of the NICs. My system came with the latest 0.22 BIOS from the 9th of October 2022 and runs microcode 0x0a50000d, which seems to be the latest AGESA V2 PI 1.2.0.7.

Memory/Storage

I used two Crucial CT32G4SFD832A 32GB DDR4-3200 CL22 DIMMs for a total of 64GB, the maximum of this board. By default, the board sets 1GB of memory aside for graphics. As the ESXi console doesn’t need it and my workload does, I changed that to 64MB in the BIOS (Advanced->AMD CBS->NBIO->SMU->GFX Configuration->UMA Frame buffer size). I did a couple of rounds of MemTest86 before installing ESXi, and they passed.

The M.2 slots only have screw holes for 2280 drives, so make sure you don’t buy 110 or 60mm drives but get 80mm ones. There was a Black Friday deal on the Samsung 980 Pro 2TB, and I opted for two with heatsink, as in systems this small NVMe controllers can easily overheat. As you can see in the image it almost doesn’t fit; the top drive is ever so slightly angled around the unused ATX power input. Any heatsink wider or higher, even by a hair, will not fit. The 980 Pro is PCIe 4.0, while the system is 3.0, but PCIe is backward compatible, and the 4.0 speeds might become useful if upgrading to another system later.

ESXi

Installing ESXi 8.0 is easy as everything in the system is compatible. Secure Boot just works and even for the NICs you no longer need the Community Networking Driver Fling.

The idle usage without VMs in ESXi is 15 Watts; with a single idle VM it is 16 Watts. The sustained boost frequency is 3375MHz with a power usage of 55 Watts and the short boost frequency is 3900MHz with an average power usage of 84 Watts.

The NIC order is equal to the labeling on the case, and the NICs work on both 2.5 and 1GbE switch ports.

What does not work is TPM, because fTPM exposes a CRB interface and not TIS/FIFO as required by ESXi. Disabling TPM in the BIOS (Advanced->Trusted Computing->Security Device Support->Disabled) clears the warnings and error messages. You can still use vTPM without a physical TPM device.

What also doesn’t work is vMotion from AMD EPYC Milan without EVC, as Process-context identifiers (PCID) aren’t available, which is weird as it should be a Zen 3 CPU feature. Either it isn’t available on mobile CPUs or maybe something isn’t correct in the firmware. But with EVC set to Zen 2 (AMD Rome didn’t support PCID) on both hosts vMotion from/to AMD Milan works fine.
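The EVC behaviour described above can be pictured as a set comparison: a running VM can only move to a host that offers every CPU feature the VM currently sees, and EVC hides everything outside a common baseline. The sketch below is purely conceptual. Only `pcid` comes from the review; the other feature names are placeholders rather than real CPUID flags, and the functions are hypothetical, not a VMware API.

```python
# Hypothetical feature sets. Only "pcid" is taken from the review; the
# other names are placeholders, not real CPUID flags.
EPYC_MILAN = {"base_a", "base_b", "zen3_extra", "pcid"}
CW56_58 = {"base_a", "base_b", "zen3_extra"}  # PCID not exposed on this box
EVC_ZEN2 = {"base_a", "base_b"}               # baseline without PCID


def vm_view(host, evc_baseline=None):
    """Features a VM sees: the full host set, or the set masked by EVC."""
    return host if evc_baseline is None else host & evc_baseline


def can_migrate(vm_features, destination_host):
    """A running VM can move only if the destination has every feature it sees."""
    return vm_features <= destination_host


# Without EVC, a VM started on Milan sees PCID, which the CW56-58 lacks.
print(can_migrate(vm_view(EPYC_MILAN), CW56_58))
# With both hosts masked to the Zen 2 baseline, migration works both ways.
print(can_migrate(vm_view(EPYC_MILAN, EVC_ZEN2), CW56_58))
print(can_migrate(vm_view(CW56_58, EVC_ZEN2), EPYC_MILAN))
```

The trade-off is that VMs on the Milan hosts also lose access to anything outside the Zen 2 baseline while EVC is enabled.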


Other than those two things everything is working well. The disk performance inside a VM is amazing with over 360K IOPS random write per SSD.

Conclusion

Both my Milan hosts and the 10GbE switch idle around 700 Watts. Even if I put one host in standby, we are still talking about 400 Watts. With both hosts in standby, the 10GbE SMB switch powered off and a consumer 10GbE switch running, the usage is 45 Watts. The average over 24 hours of this unit with 8 mostly idling VMs including vCenter, Active Directory, SQL Server, VMware Horizon, OPNsense, etc. (average total usage is 970MHz) is just 21W! And when I need to benchmark something on customer-like equipment or test something with GPUs I can power on hosts or put them in standby straight from vCenter, while for minor tasks I can use the CW56-58.

Total power savings per year would be 2600kWh and with the current energy prices, it only takes a couple of months to get a full return on my $1000 investment. I could save another 250kWh by powering off IPMI too. Needless to say: I’m keeping it.
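The review does not spell out how the yearly savings figure was derived. One reading that lands close to it, offered only as a sketch, takes the 400 Watt single-host figure as the baseline, adds the 45 Watt standby figure and the 21 Watt average of the CW56-58 as the new draw, and assumes the big hosts stay in standby 90% of the time, as hoped for in the Background section. The function names and the electricity price are placeholders, not values from the review.

```python
HOURS_PER_YEAR = 24 * 365


def yearly_savings_kwh(baseline_w, new_w, fraction_of_time):
    """Energy saved per year when `new_w` replaces `baseline_w` part of the time."""
    return (baseline_w - new_w) * HOURS_PER_YEAR * fraction_of_time / 1000


def payback_months(investment, yearly_kwh, price_per_kwh):
    """Months until the energy savings cover the purchase price."""
    return investment / (yearly_kwh * price_per_kwh) * 12


BASELINE_W = 400        # one Milan host in standby (from the review)
NEW_W = 45 + 21         # assumption: standby setup plus the CW56-58 average
STANDBY_FRACTION = 0.9  # assumption: big hosts in standby 90% of the time
PRICE_PER_KWH = 0.70    # placeholder price, not from the review

savings = yearly_savings_kwh(BASELINE_W, NEW_W, STANDBY_FRACTION)
print(f"Estimated savings: {savings:.0f} kWh per year")
print(f"Payback: {payback_months(1000, savings, PRICE_PER_KWH):.1f} months")
```

Under these assumptions the estimate comes out a little above 2600 kWh per year; if the 45 Watt figure already includes the CW56-58, the savings would be somewhat higher. The payback time depends entirely on the price per kWh that is plugged in.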

Hehe, did exactly the same just a few days before Christmas with cheap NV2 NVMes. So I was able to replace my APU2 firewall and 2 NUCs AND upgrade to 2.5Gbit LAN in my network. I also bought a cheap 8-port 2.5Gbit switch from Ali as well. I am really fine with it, but I left the big cooler in it and on the outside I connected a 2.5" Samsung SSD for the ESXi 8 installation with the supplied connector cable. Looks a bit crappy, but the complete box is hidden in my apartment so nobody cares. The fan sometimes is a bit loud. Maybe I can quiet it down with the thermal paste, as I read in your post.

Did you try ECC memory in this system? I'd be curious if it works as many AMD systems support it in an unofficial way.

No, but it could work in theory as the CPU supports it.

There are also settings for ECC RAM in the BIOS.

How's the partition layout?

Is ESXi installed on one of the two Samsung drives and does it take the whole space, or can you partition it?

Sorry for the noob question but I can't find an answer to this.

https://williamlam.com/2020/05/changing-the-default-size-of-the-esx-osdata-volume-in-esxi-7-0.html

Nice review.

it goes to my wish-list in case my old esxi v6 (unable to upgrade) with 8 machines become worse as it is working without any problems.

In my case i use an out-of-the-box usb pen drive to boot esx.

Have you tested external drives via USB3?

This is working without a problem, but asks for a real disk for the logs during installation.

Bought the 5800U box recently. Just want to ask is there some way to check host temperature from ESXi8? Not sure where to find the IPMI driver....

There is no IPMI support on the motherboard, and the ESXi monitoring display suggests that you need to load an IPMI driver. openIPMI is a Linux kernel driver, and ipmitools provides the command line tools, but I can't see a version of openIPMI for ESXi 8.

Strangely wiki page for your CPU (5825u) TDP is 15W, how did you run it at 35W?

You can change TDP in BIOS like written above:

The BIOS allows you to choose (Advanced->AMD CBS->NBIO->SMU->System Configuration) between 10/15(default)/25/35 Watt operation, apparently older BIOS versions even had 45W and 54W, but that was removed.

Any ideas what the connectors on the motherboard are for? There's one for a ribbon cable, a gpio header, the 2 sata ports, 4 pin atx, and a mysterious 3 pin header.

do you know does this model support Samsung 32GB DDR4 2666MHz RAM ?

Is there any way to use the APU to pass through to VMs? I am trying to use this to pass through to my linux plex server.

tried this and gave up with plex. working fine with jellyfin btw (only hdr is not working for 4k with it.)

replaced the thermal paste on that thing, going from 100% CPU with 100°C to 60° bonus... fan is not spinning up!

I first bought myself a 5825U, then a 5600U. Awesome computers. The second came with a newer BIOS which is not on the site (CW56-58 0.22 x64 12/12/2022 09:15:23). I dumped this BIOS and flashed it to my 5825U. Everything is running fine.

I just got mine and installed an NVMe SSD and 2 32GB RAM. When I plug the power cord, the power button goes blue and fans run. But then it turns off after 2 seconds.

I tried switching the memories, plugging out an NVMe, turning it on without any USBs plugged in, and what not. Same problem happens all the time.

Does anyone know what's going on with this machine?

I had the same thing from the very beginning, but after some time of torment, I just reset the bios with the button on the front panel and everything went smoothly

@Viper, could you please share the updated 0.22 BIOS from December? I am having some issues with the BIOS from October (RAM settings not working). Kr

https://drive.google.com/drive/folders/1po8EpufA9L3mu4T0gckoOqgjScAShi36?usp=sharing

CW56-58-2022-10-09.rom - download from my 5825u

CW56-58-2022-10-09.rom - download from www

CW56-58-2022-12-12.rom - download from 5600u

Can anyone get ECC ram to work with this board?

There are a number of settings about ECC in the BIOS (0.22).

But when i run Memtest86 it reports

"ECC Enabled: No"

and

"ECC Polling: Disabled"

I tried contacting Changwang about ECC support for this board but the only answer i got was:

"Hello AMD is supported ECC"

The 5800G is a Cezanne APU which does not support ECC on the non-PRO versions. The 5825U is a Barcelo core which does seem to support ECC.

See:

https://www.techpowerup.com/cpu-specs/ryzen-7-5825u.c2521

https://www.techpowerup.com/cpu-specs/ryzen-7-5800u.c2746

@Davd

I have the 5825U version

I was thinking about breaking out the 3rd m.2 slot for pci-e, and putting an x710 NIC on it. Does there happen to be an SR-IOV setting in the BIOS? Anyone try this?

Hey, I also have a 5800U unit! Replaced the thermal paste and got down to 63ºC! I wanted to ask: were you able to pass through the integrated AMD GPU to a VM? I was able to extract the vBIOS from the BIOS update, which is needed for passthrough, and I can share it if you want. I can use it to transcode in the VM, but I get a black screen.

What about SR-IOV? can I share my gpu between multiple VM's?

@Isaac could you please share the extracted vbios? I would very much appreciate that.

Installed ESXi 8.0, but the NICs (DHCP) aren't coming up. No network connectivity. Do I have to adjust a setting in the BIOS (0.22)?

problem solved. Was a router issue

@Viper, how do you flash the new .rom file on the 5825U? I tried modding the ISO, but it doesn't work and I can't figure it out. Thanks!

Hello, are the 2 x 2TB M.2 Samsung drives in a mirror?

No, they're independent drives.

one of my 5800u died yesterday, benchmarking ontap select on 980 pro with stock thermal paste at 25w bios config.

nics leds blink but cant power on

I got three of these nodes but I cannot do vSAN on them. The manufacturer claims support for 3 x NVMEs, but one of them is not usable (unless I leave one of the side panels open).

Hey, great write up! I ran into a similar issue with EVC on the Ryzen 5950x desktop parts - in that vcenter told me it was not Zen 3 compatible and I had to end up choosing Zen 2 EVC mode. Unfortunate, but glad to see its not just me and that other people are experiencing the same thing with other parts.

The board has 2 sata connectors and you also shared the pinout but I'm unable to find such cable or connector. Anyone found a seller?

https://www.gorite.com/intel-nuc-internal-fpc-fcc-22-pin-sata-power-cable-for-2-5-inch-drives

could it be this?

That's a different one as it has 22 pins not 12.

Got a reply from the seller I used, offering them for 15$ each excluding shipping. But that is a bit to much for my use case.

Who you talked with? Topton store on aliexpress? Could you share your contact? I really need one, mine is fully attached with storage, could need 4tbs extra 🙂